24.11.2025

GPT-OSS 120B on AI Cube Pro: Run OpenAI's Open-Source Model Locally

With GPT-OSS 120B, OpenAI released their first open-weight model since GPT-2 in August 2025 – and it's impressive. The model achieves near o4-mini performance but...

When choosing the right server infrastructure for artificial intelligence, the distinction between training and inference is crucial.

While training AI models requires enormous computing resources over long periods of time, inference – i.e. the practical use of trained models – above all requires fast response times and high throughput.

The right decision can save significant costs while optimizing the performance of your AI applications.

Powerful Hardware for Model Development

A training server is designed for the computationally intensive task of machine learning training. Here, neural networks are fed large amounts of data so that they learn to recognize patterns.

The training process can take days to weeks and requires maximum computing power to optimize model parameters.

48 GB+ VRAM for large models and batch processing

High TFLOPS and Tensor Cores for faster training runs

128 GB+ system RAM for large datasets

NVMe SSDs for fast data access during training

Optimized for Fast Production Deployments

An inference server uses pre-trained models to deliver predictions and results in real time. The focus here is on speed and efficiency.

Inference requires significantly fewer resources than training, as only forward passes through the network are computed – without backpropagation or weight updates.
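To put rough numbers on this, the sketch below uses two common rules of thumb. These are assumptions, not measurements of the servers described here. Inference with FP16/BF16 weights needs about 2 bytes per parameter, while mixed-precision training with the Adam optimizer needs about 16 bytes per parameter for weights, gradients and optimizer state. A forward pass costs roughly 2 FLOPs per parameter per token; a training step (forward plus backward) costs roughly 6. Activations, KV cache and framework overhead are ignored.

```python
# Rule-of-thumb comparison of training vs. inference cost per model parameter.
# Assumptions (not from the original text):
#   - inference keeps FP16/BF16 weights only: 2 bytes per parameter
#   - mixed-precision Adam training keeps FP16 weights (2) + FP16 gradients (2)
#     + FP32 master weights (4) + two FP32 Adam moments (4 + 4) = 16 bytes/param
#   - activations, KV cache and framework overhead are ignored
#   - forward pass ~2 FLOPs per parameter per token, forward + backward ~6

INFERENCE_BYTES_PER_PARAM = 2
TRAINING_BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4
FORWARD_FLOPS_PER_PARAM_TOKEN = 2
TRAINING_FLOPS_PER_PARAM_TOKEN = 6


def memory_gb(params: float, bytes_per_param: int) -> float:
    """Memory in GB (10^9 bytes) needed for the given parameter count."""
    return params * bytes_per_param / 1e9


def compare(params: float) -> dict:
    """Return inference memory, training memory and the per-token FLOP ratio."""
    return {
        "inference_gb": memory_gb(params, INFERENCE_BYTES_PER_PARAM),
        "training_gb": memory_gb(params, TRAINING_BYTES_PER_PARAM),
        "flop_ratio": TRAINING_FLOPS_PER_PARAM_TOKEN / FORWARD_FLOPS_PER_PARAM_TOKEN,
    }


if __name__ == "__main__":
    # Example: a hypothetical 7-billion-parameter model
    for key, value in compare(7e9).items():
        print(f"{key}: {value:.1f}")
```

For a hypothetical 7B model, this gives about 14 GB for inference weights versus about 112 GB of training state. Each training token also costs roughly three times the compute of an inference token.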

20-24 GB VRAM is sufficient for most (quantized) models

Fast response times for end users

Many requests processed in parallel

Quantization and pruning for efficiency
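Quantization stores weights with fewer bits. For example, using 8-bit integers instead of 16-bit floats halves weight memory. The following minimal sketch shows symmetric per-tensor INT8 quantization in plain Python. It illustrates the principle only and is not the scheme any particular inference engine uses. Production tools typically use finer-grained per-channel or per-group scales and also support 4-bit formats. Pruning is not covered here.

```python
# Minimal sketch of symmetric per-tensor INT8 weight quantization.
# Illustrative only: real inference stacks usually quantize per channel or
# per group of weights and use additional tricks to preserve accuracy.

from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The largest magnitude maps to 127; an all-zero tensor gets a dummy scale.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    w = [0.12, -0.5, 0.33, 0.0, 0.49]
    q, scale = quantize_int8(w)
    restored = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(w, restored))
    print("int8 values:", q)
    print(f"scale: {scale:.6f}, max error: {max_err:.6f}")
```

Each restored weight differs from the original by at most half a quantization step (scale / 2). That loss of precision is the price paid for the memory savings.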

The Most Important Differences at a Glance

| Aspect | Training Server | Inference Server |
|---|---|---|
| Main Purpose | Develop & train models | Deploy models in production |
| GPU Recommendation | RTX 6000 Blackwell Max-Q (96 GB) | RTX 4000 Ada (20 GB) |
| VRAM Requirements | 96 GB for large models | 20-24 GB sufficient |
| Computing Power | 1457 TFLOPS (Maximum) | 307 TFLOPS (Optimal) |
| Time Characteristics | Hours to weeks | Milliseconds to seconds |
| Monthly Cost | on request | €499.90 |
| Scaling | Vertical (more power) | Horizontal (more instances) |
| Workload Type | Batch processing | Request/Response |
| Optimization Goal | Training speed | Latency & throughput |


The Right Hardware for Every Use Case

Basic server: perfect for inference and production deployments

Pro server: for training and large models

Combine training and inference servers for optimal workflows: train on the Pro server and deploy on cost-effective Basic servers for production.

Answer These Questions for the Right Choice

You need maximum computing power and lots of VRAM for training new models or fine-tuning.

You use existing, pre-trained models for production applications and APIs.

Models like Llama 3.1 70B or larger require 48 GB+ VRAM, even for inference.

Most production models, such as Gemma 27B or DeepSeek 32B, run well on 20 GB of VRAM when quantized.
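These VRAM figures can be sanity-checked with simple arithmetic: weight memory ≈ parameters × bits per weight / 8. The sketch below rounds parameter counts to the nominal model sizes and deliberately excludes KV cache, activations and runtime overhead, so real requirements are higher. The helper names are hypothetical. Under these assumptions, the 27B and 32B models fit into 20 GB only at about 4 bits per weight. A 4-bit 70B model needs roughly 35 GB for its weights alone, which is consistent with the 48 GB+ recommendation.

```python
# Back-of-the-envelope VRAM estimate for holding model weights only.
# Assumptions: nominal parameter counts; 4-bit sizes ignore the small overhead
# of quantization scales; KV cache, activations and runtime overhead are NOT
# included, so actual VRAM needs are higher than these numbers.

BITS = {"FP16": 16, "INT8": 8, "4-bit": 4}
MODELS = {"Gemma 27B": 27e9, "DeepSeek 32B": 32e9, "Llama 3.1 70B": 70e9}


def weight_gb(params: float, bits: int) -> float:
    """Weight memory in GB (10^9 bytes) at the given bits per weight."""
    return params * bits / 8 / 1e9


def fitting_precisions(params: float, vram_gb: float) -> list:
    """Precisions whose weights alone fit into vram_gb (no headroom reserved)."""
    return [label for label, bits in BITS.items() if weight_gb(params, bits) <= vram_gb]


if __name__ == "__main__":
    for name, params in MODELS.items():
        sizes = ", ".join(
            f"{label} {weight_gb(params, bits):.1f} GB" for label, bits in BITS.items()
        )
        print(f"{name}: {sizes}")
        print(f"  weights fit in 20 GB: {fitting_precisions(params, 20.0) or 'none'}")
        print(f"  weights fit in 48 GB: {fitting_precisions(params, 48.0) or 'none'}")
```

In practice, some VRAM beyond the weight size must be left free for the KV cache, which grows with context length and the number of concurrent requests.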

During development, you need maximum flexibility and power for experiments.

In production, cost efficiency with consistent performance matters.

For APIs, chatbots, and interactive applications, an optimized inference server is ideal.

For non-time-critical analyses, you can leverage the power of the training server.

Start with an inference server and existing models for fast time-to-market and low costs.

Scale horizontally with multiple inference servers for higher capacity and fault tolerance.
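Horizontal scaling usually means placing several identical inference servers behind a load balancer. The class below is a minimal sketch of the idea. It rotates requests round-robin across servers and skips any that are marked unhealthy. The class name and server URLs are hypothetical. In production, this job is normally handled by a reverse proxy or load balancer such as nginx or HAProxy, combined with health checks.

```python
# Minimal sketch of round-robin load balancing with failover across several
# inference servers. RoundRobinPool and the URLs are hypothetical placeholders.

import itertools
from typing import Iterable, Set


class RoundRobinPool:
    def __init__(self, servers: Iterable[str]) -> None:
        self._servers = list(servers)
        if not self._servers:
            raise ValueError("at least one server is required")
        self._cycle = itertools.cycle(self._servers)
        self.unhealthy: Set[str] = set()

    def mark_down(self, server: str) -> None:
        """Exclude a server from rotation, e.g. after a failed health check."""
        self.unhealthy.add(server)

    def mark_up(self, server: str) -> None:
        """Return a recovered server to the rotation."""
        self.unhealthy.discard(server)

    def next_server(self) -> str:
        """Return the next healthy server; try each server at most once."""
        for _ in range(len(self._servers)):
            server = next(self._cycle)
            if server not in self.unhealthy:
                return server
        raise RuntimeError("no healthy inference server available")


if __name__ == "__main__":
    pool = RoundRobinPool([
        "http://inference-1.example:8000",
        "http://inference-2.example:8000",
        "http://inference-3.example:8000",
    ])
    pool.mark_down("http://inference-2.example:8000")
    for _ in range(4):
        print(pool.next_server())
```

Adding capacity then means appending another server to the pool. If one server fails, the remaining servers keep answering requests, which is the fault-tolerance benefit described above.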

Combine training servers for development with multiple inference servers for production to get the best price-performance ratio.

Use a training server for model development and experiments, optionally adding inference servers for demos and testing.

Both server types give you full control over your data. The servers are located in Germany and are GDPR-compliant.

On request, we take care of installation, configuration, and maintenance for both training and inference servers.

Start with one server type and switch if needed. Models are portable.

Our team helps you select and optimize your server configuration.

Let's find the optimal server solution for your project together

Unsure which server fits your needs? Book a free consultation with our CTO and find the best solution for your AI requirements.

Or contact us directly



Whether a specific IT challenge or just an idea – we look forward to the exchange. In a brief conversation, we'll evaluate together whether and how your project is a good fit for WZ-IT.

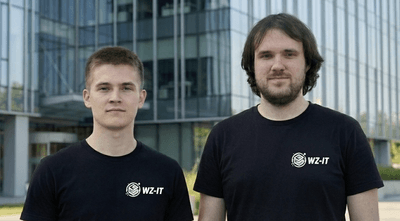

Timo Wevelsiep & Robin Zins

CEOs of WZ-IT