24.11.2025

GPT-OSS 120B on AI Cube Pro: Run OpenAI's Open-Source Model Locally

In August 2025, OpenAI released GPT-OSS 120B, its first open-weight model since GPT-2 – and it's impressive. The model achieves near o4-mini performance but...

Large Language Models (LLMs) are AI models that can understand and generate natural language. For companies, they offer enormous opportunities, from automating customer communication and intelligent document analysis to coding assistants and knowledge management.

With our LLM Hosting Germany service, you can run these powerful models on your own GDPR-compliant infrastructure without passing your sensitive data on to global cloud providers.

Whether Llama 3.1, Gemma 3, DeepSeek-R1 or other open-source models – we take care of the installation, operation and optimization of your LLM infrastructure.

Full control over your AI infrastructure

Your data stays in Germany and never leaves the EU. Full GDPR compliance without compromising on functionality.

In contrast to OpenAI, AWS or Azure, you have full control: no data transfer to third parties, no training on your data, no hidden API calls.

Meet strict compliance requirements in regulated industries such as healthcare, finance or public administration.

No hidden API costs, no surprises when it comes to billing. Predictable monthly fixed costs instead of pay-per-token with cloud providers.

Dedicated GPU resources without sharing. Optimal latency for your applications without dependence on global cloud services.

Fine-tuning and customization of your models to your specific requirements. No restrictions from API limits and no vendor lock-in.

What is needed for professional LLM hosting?

Hosting Large Language Models places special demands on the infrastructure. We ensure that everything is optimally configured.

LLMs require powerful GPUs with sufficient VRAM. For Llama 3.1 70B we recommend at least 48 GB of VRAM; for smaller models such as Gemma 3 27B, 20 GB is sufficient. Both figures assume quantized (for example 4-bit) weights. Our servers are equipped with an NVIDIA RTX 4000 Ada (20 GB) or an RTX PRO 6000 Blackwell Max-Q (96 GB).

In addition to GPU memory, you need sufficient system RAM (at least 64 GB) and fast NVMe storage for model files and caching. At 16-bit precision, the model files alone take up between about 15 GB (7B parameters) and 150 GB (70B parameters).
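The sizes above follow from a simple rule of thumb: weight memory ≈ number of parameters × bytes per parameter. The sketch below is a rough estimator, assuming 2 bytes per parameter for 16-bit weights, 1 for 8-bit and 0.5 for 4-bit quantization, plus a hypothetical 20% overhead for KV cache and runtime buffers. Real overhead grows with context length and the number of concurrent users, so treat the result as a lower bound.

```python
# Rule of thumb: weight memory ≈ parameters × bytes per parameter.
BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit weights, typical for downloaded model files
    "int8": 1.0,  # 8-bit quantization
    "q4": 0.5,    # ~4-bit quantization (ignores small per-block scale overhead)
}

# Assumed headroom for KV cache, activations and the CUDA context.
OVERHEAD = 1.2


def weight_size_gb(params_billion: float, precision: str) -> float:
    """Size of the raw weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_vram(params_billion: float, precision: str, vram_gb: float) -> bool:
    """True if the weights plus the assumed overhead fit into the given VRAM."""
    return weight_size_gb(params_billion, precision) * OVERHEAD <= vram_gb


if __name__ == "__main__":
    for params, precision, vram in [(7, "fp16", 20), (70, "fp16", 96), (70, "q4", 48), (27, "q4", 20)]:
        size = weight_size_gb(params, precision)
        print(f"{params}B @ {precision}: {size:.1f} GB weights, "
              f"fits in {vram} GB VRAM: {fits_in_vram(params, precision, vram)}")
```

Under these assumptions a 70B model needs about 140 GB at 16-bit but only about 35 GB at 4-bit, which is why it fits on a 48 GB card only when quantized.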

For production applications with multiple users, a stable, fast network connection is essential. Our servers offer gigabit connectivity with low latency within Germany.

The complete software stack, including CUDA drivers, Ollama, Open WebUI and container orchestration, is installed, configured and kept up to date by us.

Professional monitoring of GPU utilization, temperature management, automatic backups and security updates are part of our service.
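As a rough illustration of what GPU monitoring can look like, the sketch below reads utilization, temperature and VRAM usage through the CSV query mode of `nvidia-smi` and flags GPUs that cross a threshold. The helper names and the 85 °C / 95% VRAM limits are hypothetical and for illustration only; they are not our production alerting rules.

```python
import subprocess
from dataclasses import dataclass

# Fields requested from nvidia-smi; with --format=csv,noheader,nounits each GPU
# is printed as one comma-separated line, memory values in MiB.
QUERY_FIELDS = "index,utilization.gpu,temperature.gpu,memory.used,memory.total"


@dataclass
class GpuStatus:
    index: int
    util_pct: int
    temp_c: int
    mem_used_mib: int
    mem_total_mib: int


def parse_nvidia_smi(output: str) -> list[GpuStatus]:
    """Turn nvidia-smi CSV lines into GpuStatus objects (fails on '[N/A]' fields)."""
    gpus = []
    for line in output.strip().splitlines():
        values = [int(field.strip()) for field in line.split(",")]
        gpus.append(GpuStatus(*values))
    return gpus


def query_gpus() -> list[GpuStatus]:
    """Run nvidia-smi on the local host (requires the NVIDIA driver)."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_nvidia_smi(result.stdout)


def check_alerts(gpus: list[GpuStatus], max_temp_c: int = 85,
                 max_mem_ratio: float = 0.95) -> list[str]:
    """Return one message per threshold violation; thresholds are illustrative."""
    alerts = []
    for gpu in gpus:
        if gpu.temp_c > max_temp_c:
            alerts.append(f"GPU {gpu.index}: temperature {gpu.temp_c} C")
        if gpu.mem_used_mib / gpu.mem_total_mib > max_mem_ratio:
            alerts.append(f"GPU {gpu.index}: VRAM {gpu.mem_used_mib}/{gpu.mem_total_mib} MiB")
    return alerts
```

On a GPU host, `check_alerts(query_gpus())` could run periodically, for example from a cron job, and forward any messages to an alerting channel.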

As requirements grow, we scale your infrastructure horizontally (multiple servers) or vertically (more powerful GPUs). Load balancing between multiple instances is possible.
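Load balancing between instances can be as simple as round-robin. The following is a minimal sketch, assuming several model servers reachable under hypothetical URLs. It is not the balancer used in our setup; in practice a reverse proxy such as nginx or HAProxy usually takes this role.

```python
import itertools
import threading


class RoundRobinBalancer:
    """Hands out backend URLs in turn, skipping backends marked as unhealthy."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = list(backends)
        self._healthy = set(self._backends)
        self._cycle = itertools.cycle(self._backends)
        self._lock = threading.Lock()  # safe to share between request threads

    def mark_down(self, backend):
        with self._lock:
            self._healthy.discard(backend)

    def mark_up(self, backend):
        with self._lock:
            self._healthy.add(backend)

    def next_backend(self):
        with self._lock:
            # Try each backend at most once per call.
            for _ in range(len(self._backends)):
                candidate = next(self._cycle)
                if candidate in self._healthy:
                    return candidate
        raise RuntimeError("no healthy backend available")
```

A health check (for example a periodic request to each server) would call `mark_down` and `mark_up`, so that requests keep flowing to the remaining instances while one is being updated.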

We take care of everything – you simply use your LLMs

From initial setup to daily operations: Our Managed LLM Hosting handles all technical aspects.

On request, we install the LLMs of your choice (Llama, Gemma, DeepSeek, Mixtral, etc.), set up Ollama or vLLM as the model server and Open WebUI as the web interface, and provide optional API endpoints for your applications.
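Both Ollama and vLLM expose an OpenAI-compatible HTTP API, so an application can talk to either server with the same request format. The sketch below uses only the Python standard library; the base URL, model tag and optional API key are assumptions to replace with your own endpoint (by default, Ollama listens on port 11434 and vLLM on port 8000).

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, api_key=None):
    """Build a POST request for the OpenAI-compatible /v1/chat/completions route."""
    url = base_url.rstrip("/") + "/v1/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:  # only needed if the endpoint is protected by a key
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def chat(base_url, model, prompt, api_key=None, timeout=120):
    """Send one chat turn and return the assistant's reply text."""
    req = build_chat_request(base_url, model, prompt, api_key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

For example, `chat("http://localhost:11434", "llama3.1:8b", "Summarize our GDPR obligations in three sentences.")` would query a local Ollama instance, provided that model has been pulled. Switching to vLLM only changes the base URL and model name.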

We monitor your LLM infrastructure 24/7, perform system updates, optimize GPU performance and proactively respond to potential issues.

Our team supports you with model selection, integration into your applications and optimization for your specific use cases. Priority support via email and optionally phone/video.

Fixed monthly costs with no hidden fees and no pay-per-token billing. Cancellable monthly. Entry-level plans with an RTX 4000 start at €499/month.
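Whether a fixed fee beats pay-per-token billing depends on volume: the break-even point is the fixed monthly fee × 1,000,000 / API price per million tokens. The worked sketch below uses a hypothetical blended API price of €5 per million tokens, not a quote from any provider, and deliberately ignores everything except the two price figures.

```python
def break_even_tokens_per_month(fixed_eur_per_month, api_eur_per_million_tokens):
    """Monthly token volume at which the fixed fee equals the pay-per-token bill."""
    return fixed_eur_per_month * 1_000_000 / api_eur_per_million_tokens


def cheaper_option(tokens_per_month, fixed_eur_per_month, api_eur_per_million_tokens):
    """Compare a fixed monthly fee with a pay-per-token bill for the same volume."""
    api_cost = tokens_per_month / 1_000_000 * api_eur_per_million_tokens
    return "self-hosted" if fixed_eur_per_month < api_cost else "cloud API"


if __name__ == "__main__":
    tokens = break_even_tokens_per_month(499, 5.0)  # €5/1M tokens is hypothetical
    print(f"Break-even: {tokens / 1e6:.1f} million tokens per month")
```

With these numbers, the fixed fee pays off above roughly 100 million tokens per month; with a different price per token the break-even point shifts proportionally.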

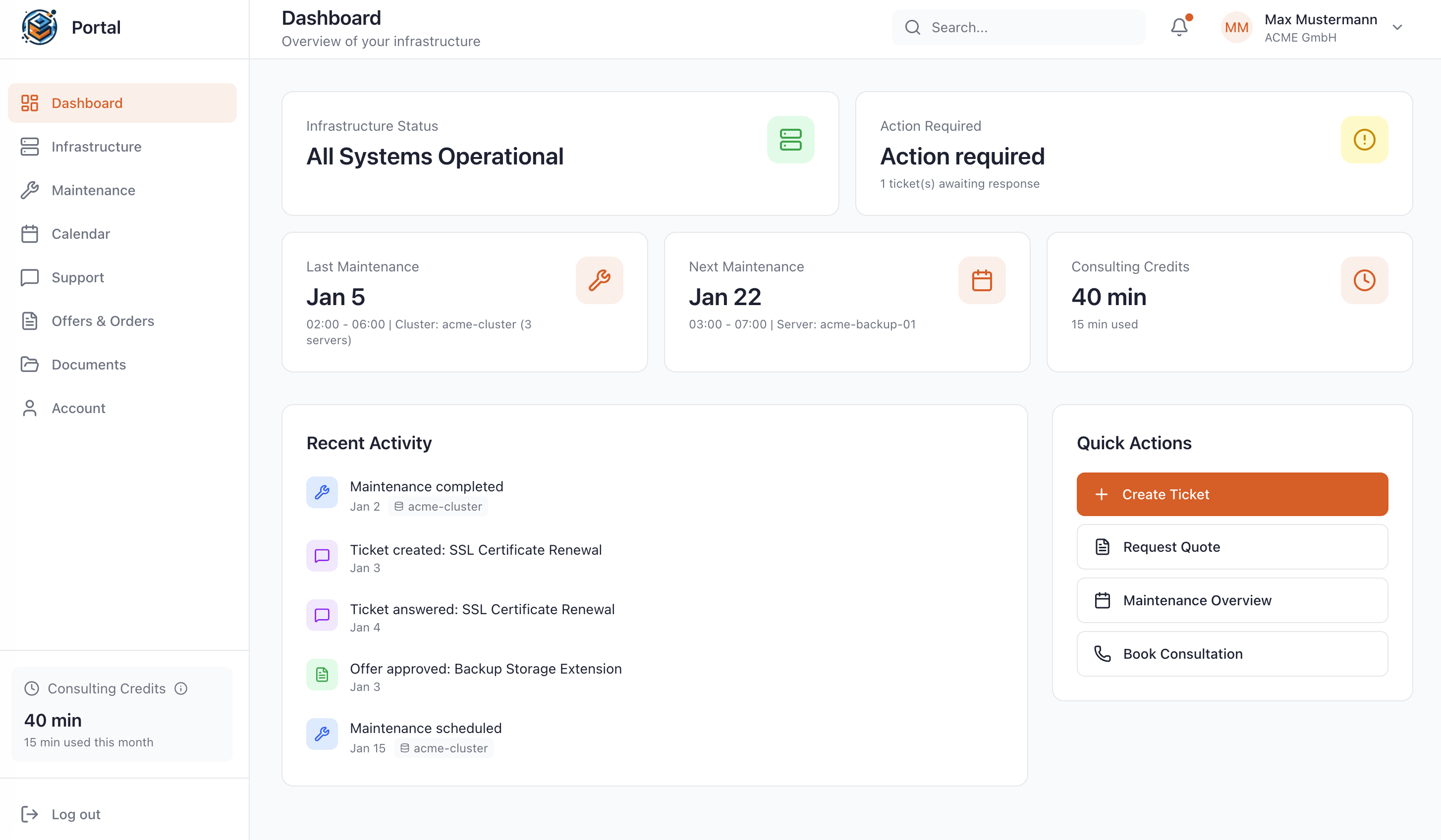

As a Managed Service customer at WZ-IT, you have access to our exclusive portal: Monitor your infrastructure in real-time, schedule maintenance, request quotes, and get direct support – all in one central location.

A selection of popular open-source LLMs

Llama 3.1

State-of-the-art model from Meta. Available with 8B, 70B and 405B parameters. Excellent tool support and reasoning capabilities.

Gemma 3

Google's most powerful model that runs on a single consumer GPU, with integrated vision support for image analysis.

DeepSeek-R1

Open reasoning model with performance on par with GPT-4. Shows its thinking process (chain-of-thought) and supports tool calling.

Mixtral 8x7B

Mixture-of-experts model from Mistral AI. It has about 47B parameters in total but activates only about 13B of them per token, so each token costs roughly the compute of a much smaller model (all 47B parameters must still fit in memory).

Phi

Microsoft's compact 14B model with outstanding performance for its size. Well suited to resource-efficient deployments.

Qwen

Alibaba's multilingual model with a strong focus on coding and mathematical reasoning. Available with up to 72B parameters.
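The "active parameters" idea behind Mixtral can be illustrated with a toy mixture-of-experts layer. Mixtral 8x7B routes each token to 2 of 8 experts per layer and weights their outputs with a softmax over the two selected router logits. The sketch below mimics that routing with scalar "experts"; it is a simplified illustration, not Mistral AI's implementation.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(x, router_logits, experts, top_k=2):
    """Toy mixture-of-experts layer for a single scalar token.

    Only the top_k experts with the highest router logits are evaluated; their
    outputs are combined with softmax weights computed over those top_k logits.
    Returns the combined output and the indices of the experts that ran.
    """
    chosen = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    # The remaining experts are never called: this is what "active parameters" means.
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen
```

With 8 experts and `top_k=2`, only two expert functions run per token, even though all eight have to be loaded.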

Ollama

The easiest way to run LLMs locally. Perfect for development, prototyping, and small to medium production workloads.

vLLM

High-performance inference engine for production workloads. Optimized for maximum throughput and minimal latency under high load.

Which framework is right for you?

We recommend Ollama for simple use cases, development and moderate load. For production applications with many concurrent users and high performance requirements, vLLM is the better choice. We're happy to help you choose!

Typical use cases and target groups

Offer your clients AI-powered services like content creation, SEO analysis or chatbots – with your own LLM infrastructure instead of expensive OpenAI API costs.

Universities, research institutions and educational organizations use their own LLMs for scientific work, studies and teaching without data privacy concerns.

SMEs in Germany use LLMs for internal knowledge bases, customer service automation, code analysis or document classification.

Connect your LLM with your own documents, wikis or databases. Employees ask questions in natural language and receive precise answers from your knowledge base.

Development teams use LLMs locally for code completion, review and documentation – without sending source code to external APIs.

Create product descriptions, marketing texts or social media content with your own LLM in your company's style.

Analyze and classify large volumes of documents, contracts or emails automatically and in compliance with the GDPR.
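Automatic classification with a self-hosted LLM usually comes down to a prompt that asks for exactly one label, plus a step that maps the model's free-text answer onto a known category. A minimal sketch, assuming a hypothetical label set and a `complete` function (any callable that sends a prompt to your model server and returns its text reply):

```python
from typing import Callable, Sequence

# Hypothetical label set; adapt to your own document types.
LABELS = ("invoice", "contract", "complaint", "other")


def build_prompt(document: str, labels: Sequence[str]) -> str:
    """Zero-shot prompt asking the model for exactly one label."""
    return (
        "Classify the following document into exactly one of these categories: "
        + ", ".join(labels)
        + ".\nAnswer with the category name only.\n\nDocument:\n"
        + document
    )


def classify(document: str, complete: Callable[[str], str],
             labels: Sequence[str] = LABELS) -> str:
    """Ask the model via `complete` and map its free-text answer onto a known label."""
    answer = complete(build_prompt(document, labels)).strip().lower()
    # Return the first known label mentioned in the answer; simple substring
    # matching is enough for a sketch but can misfire on longer answers.
    for label in labels:
        if label in answer:
            return label
    return "other"
```

For large volumes, the same function can simply be applied to each document in a batch job, e.g. `[classify(doc, complete) for doc in documents]`.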

With RAG, you connect your LLM with external knowledge sources. The model searches your documents and generates answers based on actual facts from your database. Ideal for company wikis, support databases or research archives.
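The retrieval step of RAG can be sketched in a few lines: score the stored documents against the question, keep the best matches and place them in the prompt as context. In the sketch below, simple keyword overlap stands in for the embedding-based vector search that production RAG systems typically use; the function names and prompt wording are illustrative assumptions.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase word tokens (\\w also matches umlauts in Python 3)."""
    return re.findall(r"\w+", text.lower())


def score(query_tokens, doc):
    """Count how often the query's words occur in the document."""
    doc_counts = Counter(tokenize(doc))
    return sum(doc_counts[t] for t in set(query_tokens))


def retrieve(query, documents, k=2):
    """Return up to k documents with a non-zero score, best match first."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: score(q, d), reverse=True)
    return [d for d in ranked[:k] if score(q, d) > 0]


def build_rag_prompt(query, documents, k=2):
    """Ground the model's answer in the retrieved passages only."""
    context = "\n\n".join(f"[{i + 1}] {d}" for i, d in enumerate(retrieve(query, documents, k)))
    return ("Answer the question using only the context below. "
            "If the context does not contain the answer, say so.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")
```

The resulting prompt is then sent to the model server like any other request; the instruction to answer only from the context is what keeps the reply tied to your own data.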

Why self-hosting is often the better choice

| Feature | Self-Hosted (WZ-IT) | Cloud APIs (OpenAI, etc.) |
|---|---|---|
| Data privacy | 100% in Germany, GDPR-compliant | Data goes to US providers |
| Costs | Fixed, from €499/month | Variable token prices, often more expensive |
| Control | Full control over models | Dependency on provider |
| Customization | Fine-tuning possible anytime | Limited or expensive |
| Latency | Low (servers in Germany) | Variable, depends on region |
| Availability | Guaranteed resources | Rate limits, outages possible |

Harness the power of Large Language Models – securely and sovereignly

09.11.2025

In times of rising cloud costs, data sovereignty challenges and vendor lock-in, the topic of local AI inference is becoming increasingly important for companies. With...

09.11.2025

More and more companies are considering running Large Language Models (LLMs) on their own hardware rather than via cloud APIs. The reasons for this are...

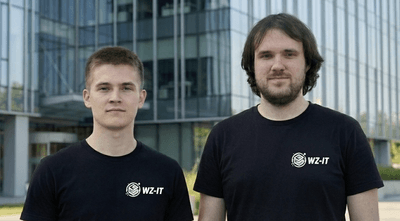

Whether you have a specific IT challenge or just an idea, we look forward to hearing from you. In a brief conversation, we'll evaluate together whether and how your project fits with WZ-IT.

Timo Wevelsiep & Robin Zins

CEOs of WZ-IT