24.11.2025

GPT-OSS 120B on AI Cube Pro: Run OpenAI's Open-Source Model Locally

In August 2025, OpenAI released GPT-OSS 120B, its first open-weight model since GPT-2 – and it's impressive. The model achieves near o4-mini performance but...

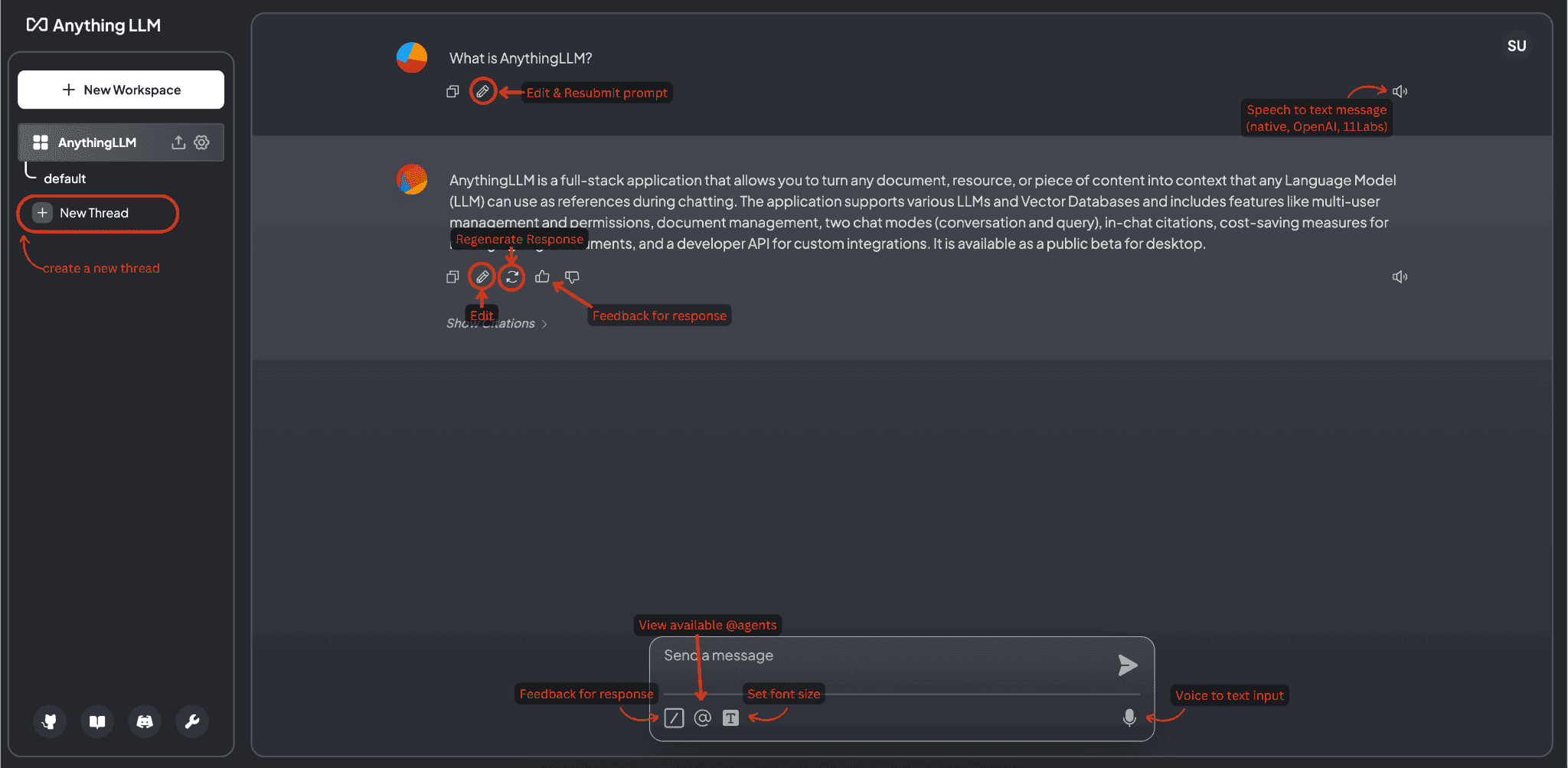

AnythingLLM is a fully self-hostable AI document chat platform that enables businesses to privately chat with their documents. As an all-in-one desktop & Docker AI application, AnythingLLM offers built-in RAG functionality, AI agents, a no-code agent builder, and much more.

With support for multiple LLM providers (Ollama, OpenAI, Anthropic Claude, Gemini, and more), AnythingLLM enables secure AI usage with complete data control. The platform supports various vector databases and offers multi-user workspaces for teams.

We install, host, and operate AnythingLLM for your company - either on our secure, GDPR-compliant infrastructure in Germany or on-premise in your own environment. Enjoy the benefits of private AI without vendor lock-in.

With 24/7 monitoring, enterprise support, automated backups, and professional maintenance, we ensure maximum availability and reliable operation of your AnythingLLM instance. This includes support for integrating your preferred LLM providers.

Chat with your documents (PDF, Word, TXT, CSV, and more) through an intuitive user interface. Ask questions and receive precise answers based on your content.

Utilize advanced RAG technology for contextual, precise answers. Your documents are intelligently indexed and retrieved on demand to provide relevant information.

Supports multiple LLM providers: Ollama (local), OpenAI, Anthropic Claude, Google Gemini, Azure OpenAI, LM Studio, and many more. Flexibly switch between providers based on requirements.

Fully self-hosted - your data never leaves your infrastructure. Well suited to companies with strict GDPR requirements, government agencies, and regulated industries.

Create separate workspaces for different teams, projects, or use cases. Each workspace can have its own documents, LLM configurations, and permissions.

Supports PDF, DOCX, TXT, CSV, HTML, Markdown, and more. Automatic processing, chunking, and embedding generation for optimal RAG performance.

Granular access controls, audit logs, optional end-to-end encryption, and integration with existing security systems (SSO, LDAP, etc.).

AnythingLLM is completely open source, which allows full transparency, security audits, and customizations according to your specific requirements. No vendor lock-in.

Run AnythingLLM on-premise, in your cloud, or use our managed hosting. Full flexibility in deployment models with GDPR compliance.

See how simply and efficiently AnythingLLM works in practice, from installation to productive use.

Professional installation on your infrastructure – on-premise, cloud or hybrid

In your data center

AWS, Azure, Hetzner & more

High-availability setup with comprehensive security and compliance features

Secure access and access control for your installation

WireGuard, NetBird or Tailscale

Keycloak, Authentik, Azure AD

TOTP, WebAuthn, YubiKey

Fail2Ban, Rate Limiting, IP Whitelisting

We set up secure VPN access to your installation – ideal for remote work and external employees.

Full-service installation with no hidden costs

AnythingLLM is the operating system for your private documents. We extend it so it not only reads documents but actively intervenes in your processes.

Integration of the chatbot into your existing software (intranet, support tool). Your app sends the question, and AnythingLLM delivers the answer including citations.
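The request/response flow described above can be sketched roughly as follows. This is a minimal illustration, not a definitive client: the host name, workspace slug, endpoint path, and the response field names (`textResponse`, `sources`, `title`) are assumptions and should be checked against the API documentation of your own AnythingLLM instance.

```python
import json
import urllib.request
from typing import List, Tuple


def build_chat_request(base_url: str, workspace: str, api_key: str,
                       question: str) -> urllib.request.Request:
    """Build the HTTP request your app would send with the user's question."""
    # Assumption: the route and JSON body shape are placeholders for illustration.
    url = f"{base_url.rstrip('/')}/api/v1/workspace/{workspace}/chat"
    body = json.dumps({"message": question, "mode": "query"}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


def parse_answer(payload: dict) -> Tuple[str, List[str]]:
    """Return the answer text and the titles of the cited sources."""
    # Assumption: field names are illustrative; adapt them to the real response.
    answer = payload.get("textResponse", "")
    citations = [src.get("title", "unknown") for src in payload.get("sources", [])]
    return answer, citations


def ask(request: urllib.request.Request, send=urllib.request.urlopen):
    """Send the request and return (answer, citations)."""
    with send(request, timeout=30) as response:
        return parse_answer(json.load(response))
```

In an intranet or support tool, `ask(build_chat_request(...))` would be called from the backend so that the API key never reaches the browser.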

Standard scrapers not enough? We write scripts that extract data from proprietary SQL databases or internal wikis, clean it, and load it into the vector store.
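As a rough sketch of the extract-and-clean step, the example below reads rows from a SQL database and turns them into clean text records ready for embedding. The `articles` table, its columns, and the in-memory SQLite database are hypothetical stand-ins for a proprietary source; the final upload into the vector store is deliberately left out because it depends on the target system.

```python
import re
import sqlite3
from typing import Dict, List


def clean(text: str) -> str:
    """Strip HTML-like tags and collapse runs of whitespace."""
    no_tags = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", no_tags).strip()


def extract_documents(conn: sqlite3.Connection) -> List[Dict[str, str]]:
    """Read rows from the (hypothetical) articles table and clean them."""
    documents = []
    query = "SELECT id, title, body FROM articles ORDER BY id"
    for row_id, title, body in conn.execute(query):
        text = clean(body or "")
        if text:  # skip rows that are empty after cleaning
            documents.append({"id": f"article-{row_id}", "title": title, "text": text})
    return documents


def demo_connection() -> sqlite3.Connection:
    """Create a small in-memory database that stands in for the real source."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE articles (id INTEGER PRIMARY KEY, title TEXT, body TEXT)")
    conn.executemany(
        "INSERT INTO articles (title, body) VALUES (?, ?)",
        [
            ("Reset", "<p>Hold the   reset button\n for 5 seconds.</p>"),
            ("Empty", "<br/>"),
        ],
    )
    return conn


if __name__ == "__main__":
    for doc in extract_documents(demo_connection()):
        print(doc)
```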

We give the AI 'hands'. We develop tool definitions that allow the LLM to execute API calls (e.g., 'Book Room X' -> API call to the calendar).
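A tool definition of this kind can be sketched as a JSON-schema-style description plus a handler function. Everything here is hypothetical: the `book_room` tool, its parameters, and the shape of the tool call the model emits are illustrative assumptions, and the calendar API call is replaced by a placeholder.

```python
import json
from typing import Any, Dict


def book_room(room: str, start: str, minutes: int) -> Dict[str, Any]:
    """Placeholder handler: a real one would call the calendar API here."""
    return {"status": "booked", "room": room, "start": start, "minutes": minutes}


# Hypothetical tool registry: the description the LLM sees plus the code to run.
TOOLS = {
    "book_room": {
        "definition": {
            "name": "book_room",
            "description": "Book a meeting room for a time slot.",
            "parameters": {
                "type": "object",
                "properties": {
                    "room": {"type": "string"},
                    "start": {"type": "string", "description": "ISO 8601 start time"},
                    "minutes": {"type": "integer"},
                },
                "required": ["room", "start", "minutes"],
            },
        },
        "handler": book_room,
    }
}


def dispatch(tool_call_json: str) -> Dict[str, Any]:
    """Validate a tool call emitted by the model and run the matching handler."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call.get("name"))
    if tool is None:
        return {"error": f"unknown tool: {call.get('name')}"}
    args = call.get("arguments", {})
    required = tool["definition"]["parameters"]["required"]
    missing = [key for key in required if key not in args]
    if missing:
        return {"error": f"missing arguments: {', '.join(missing)}"}
    return tool["handler"](**args)
```

Returning an error dictionary instead of raising lets the result be fed back to the model, which can then ask the user for the missing details.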

How we implement AnythingLLM development in practice.

Support is overloaded with recurring questions about manuals.

We embed an AnythingLLM bot in the helpdesk. Based on the customer's question, it immediately suggests relevant passages from the technical docs to the agent.

Knowledge becomes obsolete quickly. Manually uploading PDFs doesn't scale.

Cron job scripts that detect changes in SharePoint/Confluence every night and incrementally update the vector index.
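One common way to detect changes between nightly runs is to compare content hashes, sketched below. This is an assumption about the approach, not a description of a specific implementation: fetching pages from SharePoint or Confluence and writing to the vector index are left out, and `plan_sync` only decides which documents need to be re-embedded or removed.

```python
import hashlib
from typing import Dict, List, Union


def fingerprint(text: str) -> str:
    """Stable content hash used to notice edits between runs."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(current: Dict[str, str],
              known: Dict[str, str]) -> Dict[str, Union[List[str], Dict[str, str]]]:
    """Compare tonight's documents against the hashes stored by the last run.

    current: document id -> current text (fetched from the source system)
    known:   document id -> content hash saved by the previous run
    """
    new_hashes = {doc_id: fingerprint(text) for doc_id, text in current.items()}
    # New or modified documents must be (re-)embedded.
    upsert = sorted(d for d, h in new_hashes.items() if known.get(d) != h)
    # Documents that vanished from the source should leave the index too.
    delete = sorted(d for d in known if d not in new_hashes)
    return {"upsert": upsert, "delete": delete, "state": new_hashes}
```

The returned `state` would be persisted (for example as a JSON file) and passed in as `known` on the next run, so unchanged documents are never re-embedded.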

Open source, enterprise-ready for production workloads - we run your applications with the highest security standards and enterprise support

Open source software for business-critical processes requires professional maintenance, continuous updates, and enterprise-grade support. With our AnythingLLM Enterprise Managed Hosting, you get the necessary infrastructure and support to reliably operate open source in production environments. Backups, SLAs, telephone support, and personal contact - so you can focus on your core business.

We also offer customized AnythingLLM Enterprise solutions for your specific requirements. Contact us for an individual quote.

From fully managed GPU servers to compact AI Cubes – we provide the ideal infrastructure for your local LLM applications.

Powerful GPU servers with dedicated hardware for compute-intensive LLM workloads. Fully managed, scalable, and optimized for maximum performance.

Compact AI workstation for local LLM inference. Perfect for office environments, with top-tier performance and absolute data sovereignty.

Good choice – we'll help you get started or support you with ongoing operations.

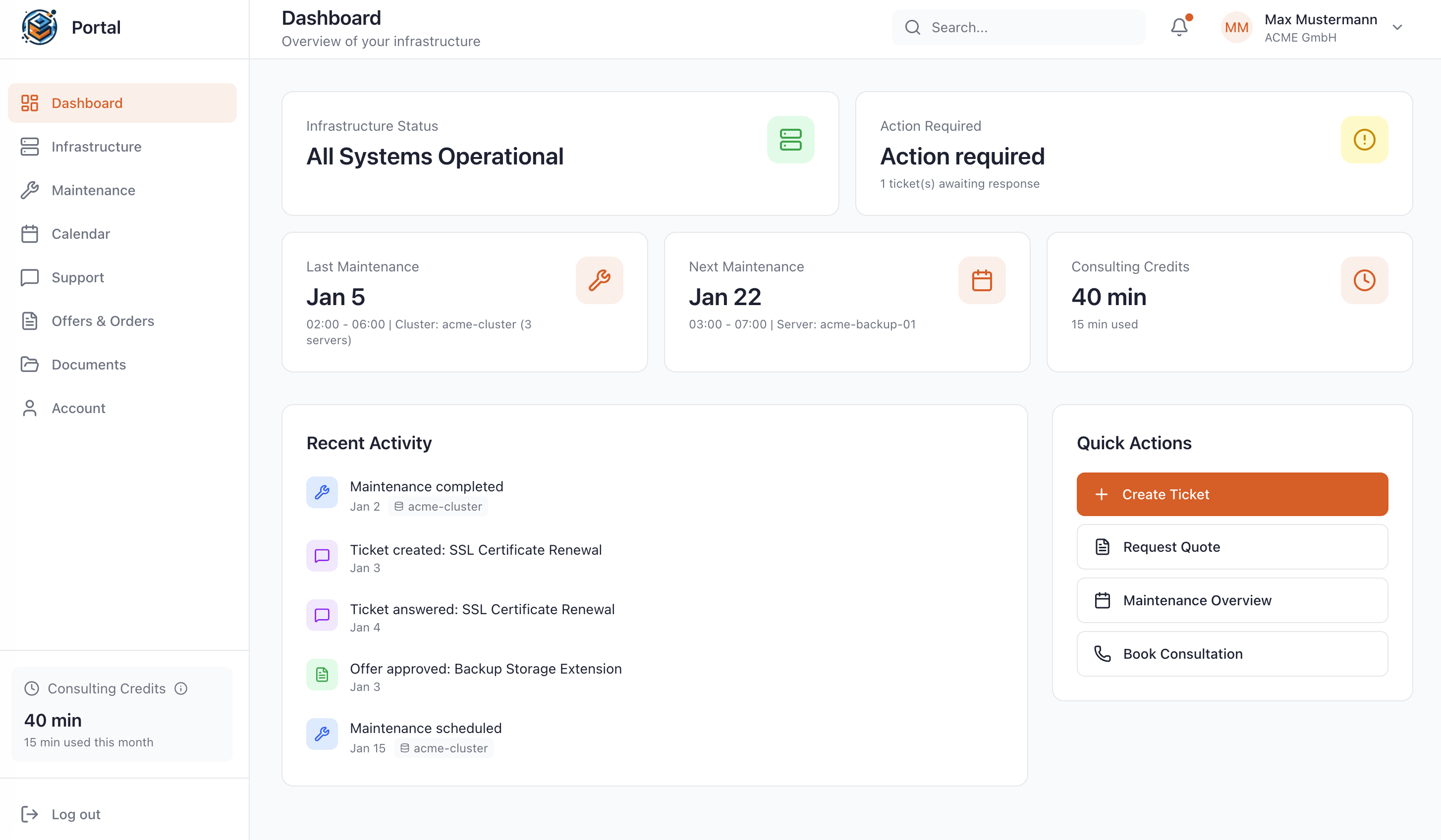

As a Managed Service customer at WZ-IT, you have access to our exclusive portal: Monitor your infrastructure in real-time, schedule maintenance, request quotes, and get direct support – all in one central location.

09.11.2025

In times of rising cloud costs, data sovereignty challenges and vendor lock-in, the topic of local AI inference is becoming increasingly important for companies. With...

08.11.2025

The use of Large Language Models (LLMs) such as GPT-4, Claude or Llama has evolved from experimental applications to mission-critical tools in recent years. However,...

Whether a specific IT challenge or just an idea – we look forward to the exchange. In a brief conversation, we'll evaluate together if and how your project fits with WZ-IT.

Timo Wevelsiep & Robin Zins

CEOs of WZ-IT